Scoring of a DevOps question

DevOps questions are scored as follows:

- Each candidate’s submission is auto-evaluated against the added validation script.

- The system records a 0 or 1 for each test case and normalizes the result against the total marks allocated to the question.

- A candidate can submit multiple times. In such cases, each of their submissions is auto-evaluated against the added test cases.

- The best-scoring of these submissions is then used to score the candidate.
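The arithmetic behind these steps can be sketched in a few lines of Python. This is only an illustration, not the platform's implementation: it assumes every test case carries equal weight, and the function names `normalized_score` and `best_submission_score` are hypothetical.

```python
def normalized_score(results, total_marks):
    """Scale a list of per-test-case results (0 or 1) to the question's marks.

    Assumption: every test case carries equal weight.
    """
    if not results:
        return 0.0
    return total_marks * sum(results) / len(results)


def best_submission_score(submissions, total_marks):
    """Return the highest normalized score across all of a candidate's submissions."""
    return max(
        (normalized_score(results, total_marks) for results in submissions),
        default=0.0,
    )
```

For instance, a candidate whose first submission passes 1 of 5 test cases and whose second passes 4 of 5 would be scored on the second submission under these assumptions.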

Format of scoring

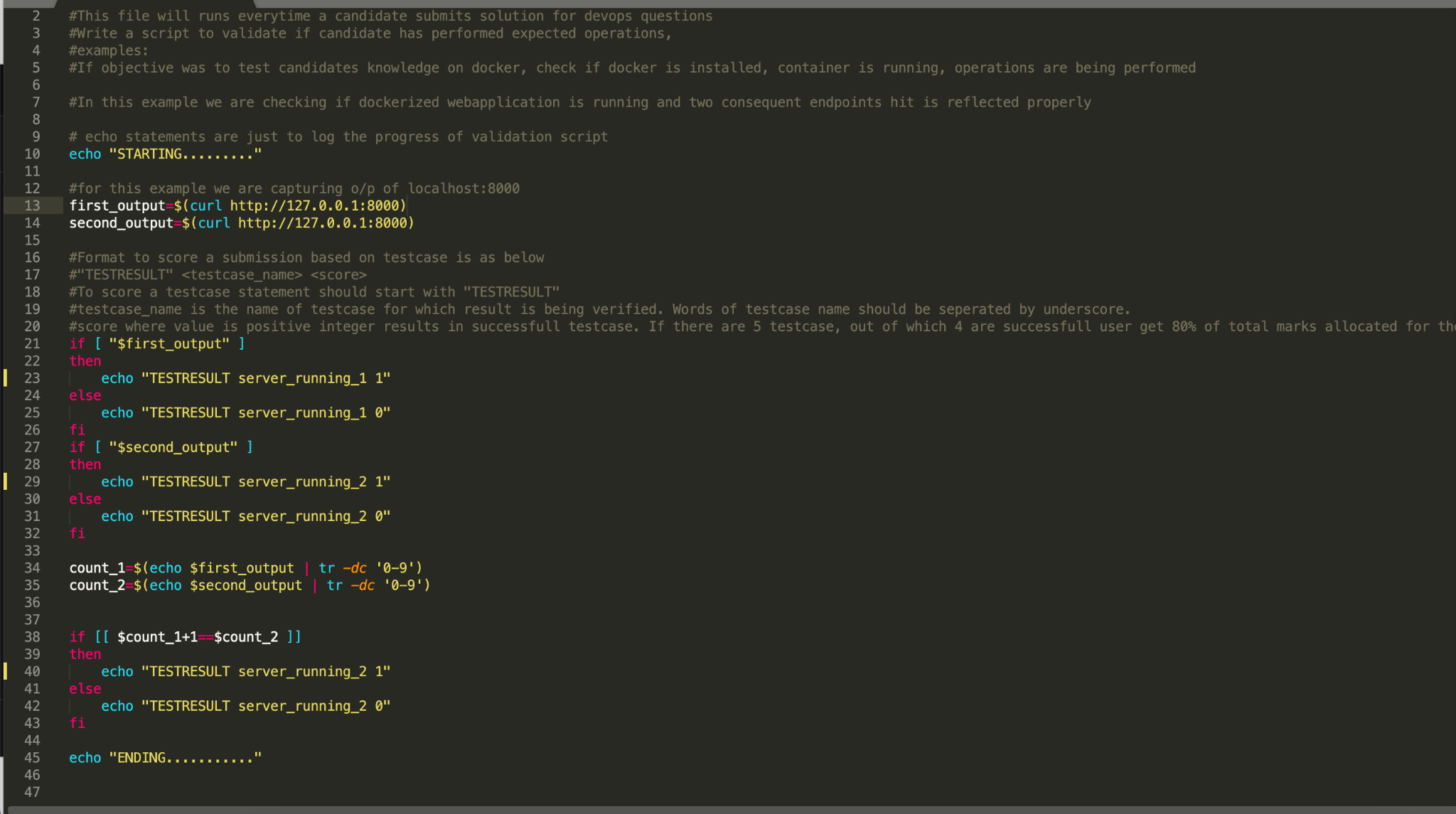

The format to score a submission based on a test case is as follows: "TESTRESULT" <testcase_name> <score>

Explanation

- To score a test case, the statement should start with "TESTRESULT".

- <testcase_name> is the name of the test case for which the result is being verified. Words in a test case name should be separated by an underscore.

- <score> is the score for the test case; a positive integer value marks the test case as successful. For example, if 4 out of 5 test cases are successful, the candidate gets 80% of the total marks allocated for the question.
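To make the format concrete, here is a minimal Python sketch of how such result lines could be read. It is not the platform's parser. It assumes the three fields are separated by whitespace, that the keyword may appear with or without the surrounding quotes, and that any other output line is ignored; the names `LINE_RE` and `parse_results` are hypothetical.

```python
import re

# Assumed layout: TESTRESULT <testcase_name> <score>, separated by whitespace.
# The quotes around the keyword are treated as optional.
LINE_RE = re.compile(r'^"?TESTRESULT"?\s+(\w+)\s+(-?\d+)\s*$')


def parse_results(output):
    """Map each test case name to 1 (positive score) or 0 (anything else)."""
    results = {}
    for line in output.splitlines():
        match = LINE_RE.match(line.strip())
        if match:
            name, score = match.group(1), int(match.group(2))
            results[name] = 1 if score > 0 else 0
    return results
```

Because `\w+` matches letters, digits, and underscores only, a test case name containing spaces would not match, which is in line with the underscore rule above.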

In the example shown in the image, the validation script checks whether the Docker web app is running at specific endpoints and scores each test case accordingly. Refer to the image to understand the scoring format.
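A validation script of this kind could look roughly like the following Python sketch. It is not the sample script from the platform: the endpoint URLs, the test case names, and the helper names (`is_up`, `format_result`, `run_checks`) are all hypothetical, and it assumes that an HTTP 200 response means the endpoint is up.

```python
import urllib.error
import urllib.request

# Hypothetical endpoints; replace with the ones your question actually checks.
ENDPOINTS = {
    "home_page": "http://localhost:8080/",
    "health_check": "http://localhost:8080/health",
}


def is_up(url, timeout=5):
    """Return True if the URL answers with HTTP 200 (assumed success criterion)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        return False


def format_result(name, passed):
    """Build one result line: TESTRESULT <testcase_name> <score>."""
    return f"TESTRESULT {name} {1 if passed else 0}"


def run_checks(endpoints, probe=is_up):
    """Probe every endpoint and return one result line per test case."""
    return [format_result(name, probe(url)) for name, url in endpoints.items()]
```

Printing each line returned by `run_checks(ENDPOINTS)` would emit one TESTRESULT statement per endpoint. The `probe` parameter is there only so the checks can be exercised without a running container.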

NOTE: You can access the sample scripts from within the question creation interface as well.